The Misuse of Journal Impact Factors

Over the past year alone, I can recall at least three separate occasions on which colleagues and I discussed pooling data and otherwise complementary research results to put together a single, more comprehensive scientific story. What convinced people to collaborate was the chance to publish the work in a “journal with a higher impact factor”, not the chance to tell a better, more complete story. The other thorny, and perhaps even more misguided, sticking point – authorship position – is worth tackling in a subsequent post.

In the various public sector hospitals where I have worked, there are tangible rewards for publishing in journals with higher impact factors, whether more conference funding or better end-of-year performance assessments. In academia, publishing in high-impact-factor journals is thought to be important for promotion and tenure (since the criteria for these are not particularly clear or transparent to most academic staff). The impact factor is also used as a key performance indicator in assessing research grants.

The value of one’s research work, and even how one is viewed in the “pecking order” of clinical researchers, become bound by where – rather than what – one has published. The true impact of one’s work – which admittedly is far harder to measure (short of resorting to other metrics, such as h- and i-indices, that have their own shortcomings) – becomes secondary. There is a creeping, somewhat misplaced elitism, for which a close local analogy would be judging the worth of a child by whether they attend a “prestigious” primary school such as Nanyang or Rulang (think Nature, Science, NEJM, Lancet) or a neighbourhood school (think journals with impact factor <5.0).

Now, the primary problem here (there are many) is not with the journal impact factor (IF) per se. The IF is derived from the citation count of a journal’s articles over a fixed time period, and has been circulated widely since 1975 as a tool for academic libraries to decide which journals to subscribe to. The problem is its institutionalized use as a measure of the value of individual research (i.e. research is considered more impactful if the paper makes it into BMJ than SMJ), with consequent ramifications for career progression and rewards. This has led (as with the obsession with authorship positions) to gaming of the system by both researchers and journal editorial/management teams; to a certain perversion of the scientific process, when “novelty” becomes more important than scientific rigour or the real-world necessity of the work; and to delays in publication in the many cases where authors try their luck punting their work to journals with higher IFs and suffer multiple rounds of rejection. I have personally been guilty of the last.
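For readers who have not seen it written out, the commonly cited two-year form of the IF for a year $y$ can be sketched as below. The symbols are my own shorthand, and the exact rules for what counts as a “citable item” are set by the index provider rather than by this formula.

$$
\mathrm{IF}_y = \frac{C_y(y-1) + C_y(y-2)}{N_{y-1} + N_{y-2}}
$$

Here $C_y(t)$ is the number of citations received in year $y$ by items the journal published in year $t$, and $N_t$ is the number of citable items the journal published in year $t$. It is a journal-level average, which is exactly why it says little about any single paper.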

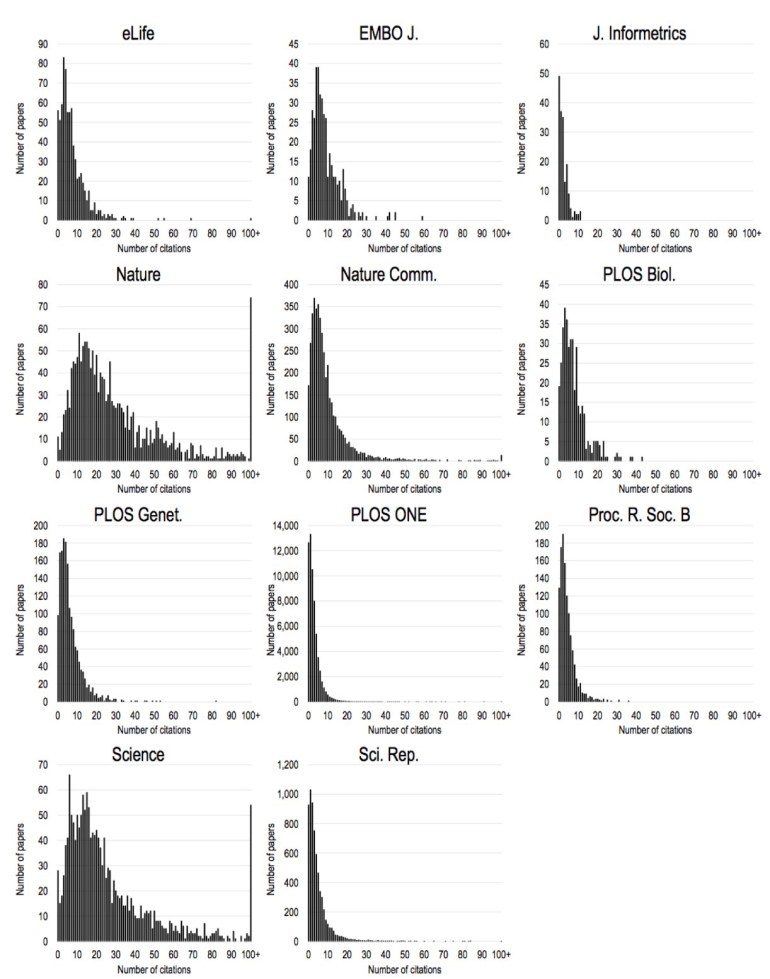

This problem has been recognized for years, and there have been various attempts to highlight and tackle it, most notably the San Francisco Declaration on Research Assessment (DORA) in 2013 and, just a few months ago, a preprint by prominent journal editors on bioRxiv. This piece is particularly striking for showing (again) the long tail of citations: only 15-25% of each journal’s articles meet or exceed the journal’s IF, and these account for >50% of that journal’s citation count. It showed again that, statistically, the worth of a piece of research work – even when measured narrowly in the form of citations – corresponds poorly with the IF of the journal it is published in.
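To make the long tail concrete, here is a small Python sketch. The citation counts are invented purely for illustration (they are not taken from the bioRxiv piece), the function name `citation_skew` is my own, and a plain mean over one set of articles stands in for the IF, which in practice is computed over a specific citation window.

```python
from statistics import mean, median


def citation_skew(citations):
    """Summarise how unevenly citations are spread across a journal's articles.

    `citations` is a list with one citation count per article.
    The mean is used here as a simplified stand-in for the journal IF.
    """
    avg = mean(citations)
    # Articles whose own citation count meets or exceeds the journal average.
    at_or_above = [c for c in citations if c >= avg]
    return {
        "mean": avg,
        "median": median(citations),
        "share_of_articles_at_or_above_mean": len(at_or_above) / len(citations),
        "share_of_citations_from_those": sum(at_or_above) / sum(citations),
    }


# Hypothetical journal with 20 articles: made-up numbers, chosen to be skewed.
hypothetical_citations = [0, 0, 0, 1, 1, 1, 1, 2, 2, 2,
                          2, 3, 3, 3, 4, 5, 8, 12, 20, 30]

summary = citation_skew(hypothetical_citations)
for name, value in summary.items():
    print(f"{name}: {value}")
```

With these made-up numbers the average is 5 citations per article while the median article has only 2; a quarter of the articles reach the average, and between them they hold three quarters of the citations. The typical paper sits well below the headline figure.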

For those wishing for more information on this controversy, this old (2012) blog post by Prof Stephen Curry of Imperial College is well worth reading; he describes the issue of IF far more thoroughly and lucidly than I have here.

The solution – if there is any – is not clear. There is broad cultural acceptance of IFs, and the metric is seductively simple to use. On the journal side, many publishers, such as eLife and the American Society for Microbiology, are de-emphasising IFs for their journals. Others such as Nature and PLOS are broadening the range of metrics at both journal and individual article level. As for researchers, academic institutions, and funding agencies (and local hospitals), gradually adopting some or all of DORA’s recommendations would be a start. Both ORCID and altmetrics are also interesting innovations for moving the conversation away from journal IFs.

